In Incremental Load in QlikView – Part 1 we saw Incremental load with Insert Only option.

In this session, we will see how to do Incremental Load with

– > Insert and Update

– > Insert, Update and delete

Insert and Update

Incremental Load with Insert and Update is similar to “Insert Only” (see my earlier blog Incremental Load in QlikView – Part1)

In this type of Incremental Load you will take new rows from the database and add/append to the existing QVD.

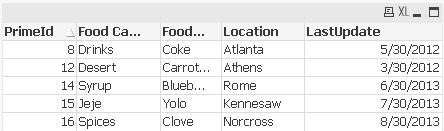

In our previous excercise, we have loaded, the following rows/table in QlikView QVD

For this example, i will add 2 new rows into my existing database table

Now our objective is to load only 2 new rows and not to overwrite the entire table

1. We will start with the inital load

2. Incremental load i.e. load new rows based on the LastUpdate date

3. Concatenate. Here you will check for PrimaryKey and load only those rows where PrimaryKey is new.This will ensure that only new rows are added and existing rows are not overwritten

Insert, Update and delete

This option is similar to Insert and Update with only exception that you need to exclude/delete records that have been deleted from the source system.

To explain my point, i have deleted a row and added a new row in my database table. I have deleted the row where PrimeId = 6. I have added a row where PrimeId = 16. Now my database table looks like

Now using Insert,Update and delete , you should be able to load the new rows and exclude the ones which are deleted in the source system and store the result into qvd.

Follow the same steps as above with Initial load,Incremental load,concatenate and then use Inner join script to exclude records that are deleted from the source system.

After executing this load, you will see that new rows are added to your qvd and the row which was excluded from the db is deleted from your qvd.

If you select from the Qvd and create a Table box, it will look like the following, your qvd deleted the record for PrimeId = 6 and added a new record where PrimeId = 16

Incremental loads are very useful when you have to load large transactional tables frequently and maintain the data integrity.

Further readings: quickintelligence

Hi Chandra,

Nicely explained. Very helpful. Appreciate your efforts in sharing your knowledge and helping all of us out.

Cheeers!

Pritam

How can i get not exist data when im using SQL server to get data

Damitha:

Not Exists is a QlikView function which you use as part of your Load script. Load command allows you to use QlikView specific functions.

So it doesn’t matter if you are using SQL Server or Oracle or any other database.

Hope this helps.

Hi,

I started Qlikview preparation recently. able to understand. can you kindly explain what type of work will be assigned to a qlikview developer in real time and what topics need to be stressed more while preparing for interviews

Vijetha:

There are 2 parts to QlikView viz. QlikView designer and QlikView developer.

QlikView designer is responsible for creating dashboards,graphs and tables using the underlying data model. QlikView designer should apply best practices of dashboard designing.

QlikView developer is responsible for creating data model. Working on load scripts etc.

These roles always overlap. QlikView developer may do dashboard designing as well.

In my experience, a wholesome QlikView practitioner should have knowledge of creating data models and designing dashboards.He/She should also have exposure to QlikView server so that he can deploy dashboards on the server.

Hi Chandra,

can we update a record in qvd file. as per your example above for Insert and Update it is only inserting new records without overwriting the existing records. But if for eg any column value is updated for an existing row then that will not reflect in the qvd file. Is there a way around this?

Abishek:

If the row has changed in the database that means it will be stored in the database with a new lastupdatedate and therefore will be treated as a new record.

Hello Chandraish,

I have two question with respect to this post.

1. Since my data source is excel, the updation in excel file is not getting reflected in qlikview when I go for insert & update method

2. How do I use SQL SELECT statement for excel table for doing inner join in ‘insert, update & delete’ method.

Sunil:

As mentioned in my blog https://www.learnallbi.com/incremental-load-in-qlikview-part1/

Incremental load requires the use of QVD files.

If you are using an excel file, convert that into a QVD file and then try the example.

Also SQL Select is a db statement so it will work only if you are connecting to a database table.

Hi

Just curious to know is it possible to do incremental load with using qvds in Qlikview.

Regards

Kiran Kumar

Kiran:

Incremental load is possible only by using QVDs

Hi Chandra,

i try an incremental load with Oracle, it’s ok.

But the Exists function accept only 2 key Fields.

My table have 3 key fields.

I tried to create a composite key field on loading, but exists accept only real fields present on table.

Do you have some other suggest ???

Thanks

have a small query. Hope you can help me on this.

I have three different sources which I get as QVX file( Source1.qvx source2.qvx source3.qvx). I’m directly importing this qvx files into the qlikview. In these three sources I have common variables Year, Month , Day. Since Source1 is my main data. I created a drop down the qlikview for user selection. I’m looking for global variable when user selects year , month, day from the source1 the selection must be pass as parameters(year, Mon,Day) to source2 and source3 inputs.

Sources: Source1, Source2, Source3

Parameters to be passed : Year, Month, Day

From: Source1 To: Source2,Source3

Data: In source1

Year Month Day

2015 Jan 1

2015 Jan 2.

………..

………….

Could you suggest me solution this please.